Abstract

In this paper, a fusion model based on a long short-term memory (LSTM) neural network and enhanced search ant colony optimization (ENSACO) is proposed to predict the power degradation trend of proton exchange membrane fuel cells (PEMFCs). First, the Shapley additive explanations (SHAP) value method is used to select the external characteristic parameters with high contributions as inputs for the data-driven approach. Next, a novel swarm optimization algorithm, ENSACO, is proposed. It improves the ant colony optimization (ACO) algorithm with a search enhancement factor to avoid premature convergence and to accelerate convergence. Comparative experiments are conducted among particle swarm optimization (PSO), ACO, and ENSACO. Finally, a data-driven method based on ENSACO-LSTM is proposed to predict the power degradation trend of PEMFCs, and it is validated with actual aging data. The results show that, within a limited number of iterations, the optimization capability of ENSACO is significantly stronger than that of PSO and ACO. In addition, the prediction accuracy of the ENSACO-LSTM method improves by approximately 50.58% on average compared with LSTM, PSO-LSTM, and ACO-LSTM.

1 Introduction

Energy security has gradually become a prominent topic of international discussion in the 21st century. With continuous societal progress, there is an urgent need to find renewable and clean energy alternatives to mitigate the energy crisis. Hydrogen energy, with its sustainability, high efficiency, and pollution-free characteristics, has emerged as a highly anticipated energy solution [1].

Hydrogen fuel cells, as a promising hydrogen energy carrier, provide energy through electrochemical reactions with high safety, high conversion efficiency, and low environmental pollution [2]. They are widely used in portable, stationary, and transport power generation [3-4]. However, issues such as membrane drying, flooding, and catalyst poisoning can severely affect performance, causing temporary or permanent degradation, which is extremely unfavorable to the development prospects of proton exchange membrane fuel cells (PEMFCs) [5-7]. In 2014, Marine Jouin introduced the concept of prognostics and health management (PHM) for fuel cells, emphasizing the importance of life prediction technology [8]. Predicting the remaining useful life (RUL) is crucial for effective management [9-10]. According to the literature [11], the health condition of PEMFCs denotes their capability to sustain adequate power output.

Numerous studies have developed mechanistic, empirical, or semi-empirical degradation models for accurate state-of-degradation predictions. Zhang et al. [12] proposed a physics-based model using a Kalman filter to reveal interactions between operating conditions. Hu et al. [13] created a model based on the formulas of Shanghai Automotive Industry Corporation (SAIC) and Toyota, validated with cumulative time vectors. Pei et al. [14] introduced a life prediction method using a first-order kinetics model and a proportional factor. These model-driven approaches reduce reliance on operational data, but accurately modeling the physicochemical properties of PEMFCs remains challenging. Pukrushpan [15] developed a comprehensive model for terminal voltage, though many of its parameters must be determined from experience. Semi-empirical models rely heavily on experimental measurements and require adaptation for different fuel cell types.

Due to the complex physical and chemical characteristics of fuel cells, many researchers are turning to data-driven methods for degradation prediction based on actual operational data. Javed et al. [16] introduced a constrained ensemble of connectivity networks to forecast PEMFC performance. Sun et al. [17] integrated convolutional neural networks (CNN) with long short-term memory (LSTM) networks to predict fuel cell degradation. Morando et al. [18] used echo state networks for performance prediction. Other data-driven methods include relevance vector machines [19] and LSTM algorithms [20]. These approaches primarily rely on high-quality data.

A major challenge of data-driven approaches is selecting appropriate hyperparameters to improve model training and prediction accuracy. For example, the LSTM model solves the long-term dependency problem, but determining hyperparameters such as the learning rate and Dropout probability is difficult [21]. Existing studies often rely on experience or trial and error [22]. The need for adaptive optimization of hyperparameters is highlighted by [7], which proposed a long short-term memory network method based on particle swarm optimization (PSO-LSTM).

This paper proposes an adaptive optimization LSTM method based on the enhanced search ant colony optimization (ENSACO) algorithm, which effectively optimizes hyperparameters and enhances the accuracy and generalization of LSTM networks. To expedite hyperparameter optimization, the ant colony optimization (ACO) algorithm is improved by adding a search enhancement factor, enabling rapid identification of near-optimal solutions.

The structure of this paper is organized as follows. Firstly, Section 2 analyzes the external characteristic parameters affecting fuel cell power degradation and performs feature selection. Next, Section 3 presents the implementation principles of the ENSACO algorithm designed in this study and explains the hybrid prediction algorithm formed by combining ENSACO with LSTM. Then, Section 4 handles data processing and discusses the predictive performance of the ENSACO-LSTM hybrid prediction method. Finally, Section 5 summarizes the entire paper.

2 Selection of external characteristic parameters for fuel cells

According to the PEMFC model established by Pukrushpan [15], the parameters affecting fuel cell power include voltage, current density, stack temperature, relative humidity of the fuel cell, and reactant partial pressures. To improve training performance, input feature selection is required to reduce the training complexity [22].

Therefore, to evaluate the importance of the parameters affecting fuel cell power degradation and to select those with relatively high influence, this paper employs the Shapley additive explanations (SHAP) value method to explain the contribution of each parameter to the prediction of PEMFC power degradation trends. The SHAP value is a feature importance explanation method based on game theory, used to assess the contribution of each feature to the model’s prediction. SHAP values allocate ‘fair’ contributions to each feature, helping to understand the role of each feature in the model’s decision-making process [23-25].
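To make the idea of a 'fair' contribution concrete, the following minimal sketch computes exact Shapley values by enumerating all feature coalitions. The three-feature linear model, its coefficients, and the baseline are hypothetical and are not taken from the paper's dataset; practical SHAP implementations approximate this enumeration for larger models.

```python
from itertools import combinations
from math import factorial


def shapley_values(value_fn, n_features):
    """Exact Shapley values for a set function value_fn(frozenset) -> float."""
    players = range(n_features)
    phi = [0.0] * n_features
    for i in players:
        others = [p for p in players if p != i]
        for k in range(len(others) + 1):
            # Shapley weight of a coalition of size k that excludes feature i.
            weight = factorial(k) * factorial(n_features - k - 1) / factorial(n_features)
            for subset in combinations(others, k):
                s = frozenset(subset)
                # Marginal contribution of feature i when added to coalition s.
                phi[i] += weight * (value_fn(s | {i}) - value_fn(s))
    return phi


# Hypothetical toy model: output = 2*x0 + 1*x1 + 0*x2, explained at x = (1, 1, 1).
# Features outside the coalition are replaced by the baseline value 0.
COEFFS = (2.0, 1.0, 0.0)
X = (1.0, 1.0, 1.0)
BASELINE = (0.0, 0.0, 0.0)


def toy_value(coalition):
    return sum(c * (X[j] if j in coalition else BASELINE[j])
               for j, c in enumerate(COEFFS))


phi = shapley_values(toy_value, 3)
print(phi)
```

For this linear toy model each feature's Shapley value equals its coefficient times its deviation from the baseline, and the values sum to the difference between the full prediction and the baseline prediction.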

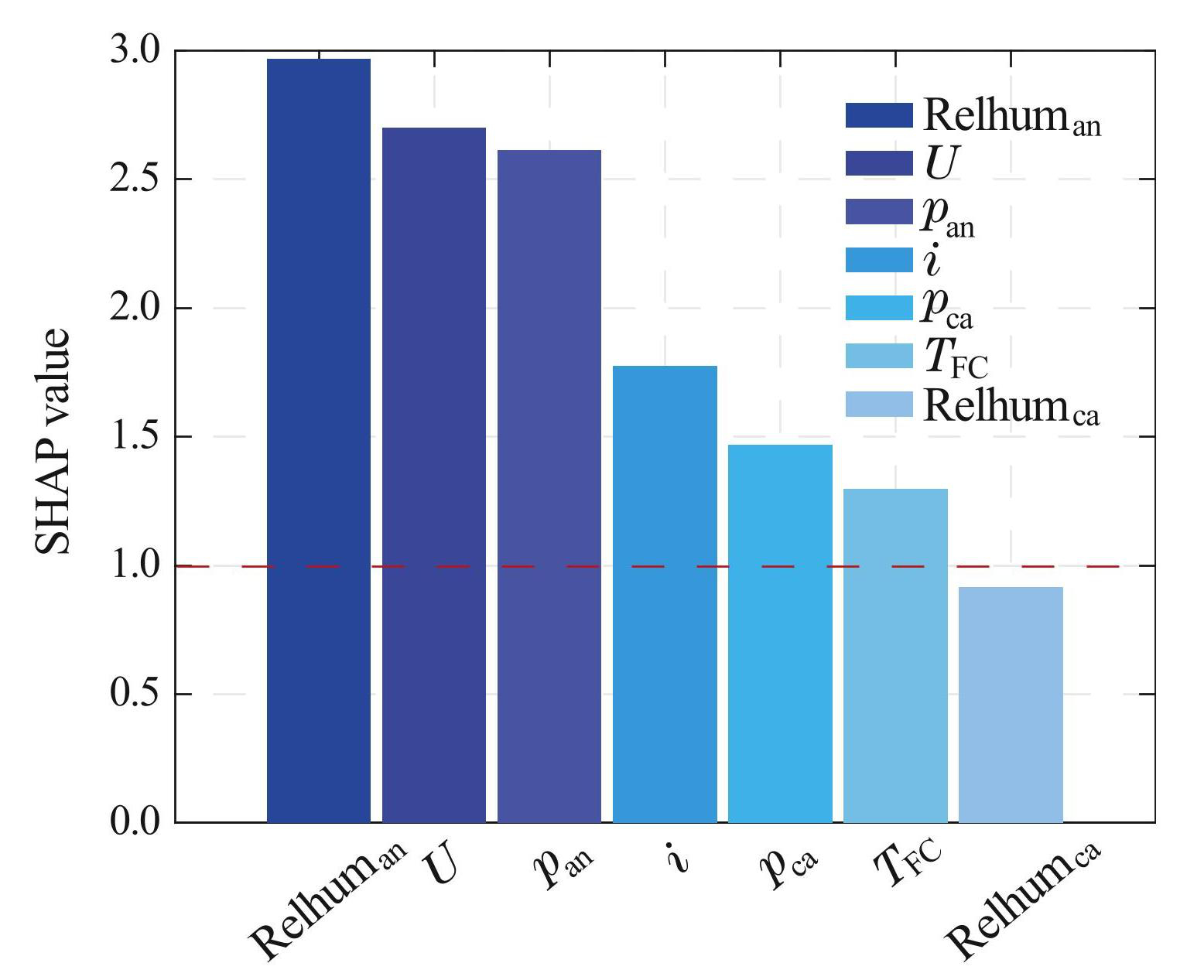

This paper evaluates the parameter contributions using the dataset provided by Xiangyang Daan Automotive Testing Center, which is described in Section 4.1. The results are shown in Fig.1. The external characteristic parameters with SHAP values greater than 1 are selected as inputs: stack voltage $U_{\mathrm{st}}$, current density $i$, stack temperature $T_{\mathrm{st}}$, anode relative humidity $\mathrm{Relhum}_{\mathrm{an}}$, hydrogen partial pressure $p_{\mathrm{an}}$, and oxygen partial pressure $p_{\mathrm{ca}}$.

3 Degradation model with ENSACO-LSTM architecture

In the previous section, the input parameters for training the data-driven power degradation prediction model were selected. In this section, a data-driven method for predicting the power degradation trend of PEMFCs is designed. First, the ant colony algorithm is improved to enhance its performance in optimizing LSTM hyperparameters. Then, the ENSACO-LSTM hybrid algorithm is established, and the operational logic of the method is designed with a focus on degradation trend prediction.

Fig.1 Contributions of various external characteristic parameters to power degradation prediction

3.1 Ant colony algorithm

Whereas the PSO algorithm is prone to premature convergence, the ant colony optimization (ACO) algorithm enhances communication between solutions through the pheromone mechanism, which helps to avoid premature convergence [26]. ACO represents solutions using ants, with pheromone levels reflecting fitness and guiding solution selection. Ants communicate via pheromones, and each ant determines its direction based on pheromone concentration. Pheromones evaporate over time while shorter paths are reinforced more strongly, so concentrations grow on shorter paths, which in turn attracts more ants to those paths.
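The evaporation and reinforcement mechanism described above can be illustrated with a small expected-value simulation of the classical ACO idea on two alternative paths. This is a sketch of the textbook mechanism, not of ENSACO; the path lengths, evaporation rate `rho`, deposit constant `q`, and colony size are hypothetical values chosen for illustration.

```python
def simulate_two_paths(lengths=(1.0, 2.0), rho=0.5, n_ants=10, q=1.0, iterations=20):
    """Expected-value pheromone dynamics on two alternative paths."""
    tau = [1.0, 1.0]  # both paths start with equal pheromone
    for _ in range(iterations):
        total = sum(tau)
        # Expected number of ants on each path, proportional to its pheromone.
        ants = [n_ants * t / total for t in tau]
        # Evaporate a fraction rho, then deposit q / length per ant,
        # so each ant on the shorter path deposits more pheromone.
        tau = [(1.0 - rho) * t + a * q / length
               for t, a, length in zip(tau, ants, lengths)]
    return tau


tau_short, tau_long = simulate_two_paths()
share_short = tau_short / (tau_short + tau_long)
print(f"pheromone share on the shorter path: {share_short:.3f}")
```

Starting from equal pheromone levels, the shorter path ends up holding most of the pheromone, which is the positive feedback the paragraph describes.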

3.2 Enhanced search ant colony algorithm

The ACO algorithm is known for its strong global search capability, distributed computing, and robustness, making it effective for optimization, especially combinatorial problems. However, due to fixed pheromones, transition probabilities, and ant movement settings, the traditional ACO lacks a mechanism to differentiate its operations in the early and later stages of the search, leading to slow convergence. This issue becomes more pronounced as the problem size grows, requiring a large number of iterations to reach an optimal solution. To address the problem, this paper proposes an enhanced search ant colony algorithm to converge quickly within a limited number of iterations.

To enable the ant colony to quickly find an approximate optimal solution that is close to the global optimal solution within a limited number of iterations, this paper introduces a search enhancement factor

(1)

where β is the influence coefficient of the enhancement factor, representing the strength of the factor’s impact on the search, and β ∈ (0, 1]. Note that δ is a monotonically decreasing function of g, with δ ∈ [1/e, 1). When g is small, indicating the early stage of the search, δ is close to its upper bound of 1; when g is large, indicating the later stage of the search, δ reaches its minimum, δ = 1/e. This search factor allows the search direction and performance to be adjusted as needed during the search process of the ant colony system.
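As an illustration only, the sketch below implements one hypothetical function that has the properties stated above: it decreases monotonically with g, stays within [1/e, 1), and depends on β ∈ (0, 1]. The form δ(g) = exp(−(g/G)^β), with G an assumed maximum number of iterations, is an assumption made for this example and is not taken from Eq. (1), whose exact form may differ.

```python
import math


def enhancement_factor(g, g_max, beta=1.0):
    """Hypothetical decay delta(g) = exp(-(g / g_max) ** beta).

    This is NOT the paper's Eq. (1); it is one function with the stated
    properties: monotonically decreasing in g and bounded in [1/e, 1).
    """
    if not 0.0 < beta <= 1.0:
        raise ValueError("beta must lie in (0, 1]")
    if not 1 <= g <= g_max:
        raise ValueError("g must lie in [1, g_max]")
    return math.exp(-((g / g_max) ** beta))


g_max = 50  # assumed iteration budget
deltas = [enhancement_factor(g, g_max, beta=0.5) for g in range(1, g_max + 1)]
print(f"first: {deltas[0]:.4f}, last: {deltas[-1]:.4f}")
```

With this assumed form, β changes how quickly the factor drops from values near 1 in the first generations to exactly 1/e in the last generation.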

Based on the search enhancement factor δ, the adjusted ant transition probability $P_{\mathrm{ENS}}$ is given by

(2)

From Eq. (2), it can be deduced that in the early stages of the algorithm, the transition probability P is relatively large, resulting in a larger transition probability $P_{\mathrm{ENS}}$. This guides the ant colony to perform a global search, thoroughly exploring the solution space. In the later stages of the algorithm, the transition probability $P_{\mathrm{ENS}}$ becomes smaller, guiding the ant colony to perform a local search. This allows the algorithm to search the neighborhood of near-optimal solutions as much as possible within a limited number of iterations, ensuring the convergence of the algorithm.

For ACO, the pheromone update rule is relatively fixed, which means it cannot guide the ants to the optimal solution in a short time. Therefore, this paper proposes a pheromone update method that provides stronger guidance, in which the fixed pheromone evaporation constant ρ is replaced with a pheromone evaporation function that changes with the iterations:

(3)

where φ is the evaporation variation constant, and φ ∈ (0, 1].

Therefore, the ENSACO pheromone update rule is shown in Eq. (4).

(4)

In the early stages of the search, the pheromone evaporation coefficient $\rho_{\mathrm{ENS}}$ is relatively large, meaning that during the pheromone update, $\tau_{\mathrm{ENS},i}(g+1)$ is significantly influenced by the fitness function value f, resulting in large differences in pheromone levels among individual ants. The resulting larger $P_{\mathrm{ENS}}$ guides the ant colony to favor global search, actively exploring the solution space. In the later stages of the search, $\tau_{\mathrm{ENS},i}(g+1)$ is more influenced by the pheromone levels from the previous generation. After a certain number of iterations, as pheromone continues to evaporate, the differences in pheromone levels among individual ants decrease. The smaller $P_{\mathrm{ENS}}$ then promotes local search by the individual ants.

Although the ant position update rule of the traditional ACO has a strong global search capability, its overly random search leads to a slower convergence rate. ENSACO improves the ant position update rule so that convergence is accelerated while the global search capability is retained. To ensure that the ants move more rapidly in the early stages and more precisely in the later stages, the position update rule is defined as

(5)

where η is the ant induction constant, representing the degree to which ants in the global search approach the historical optimal solution; $X_{\mathrm{hisbest}}$ is the historical optimal position reached by the ant colony, and $x_{\mathrm{hisbest},j}$ is the j-th dimension of that position; $r_3$ is a random number with each element in [0, 1]. And

If an ant moves outside the solution space, boundary handling is performed using the boundary absorption method. That is, when an ant exceeds the boundary, its coordinates are set to the boundary value of the solution space. Additionally, if the fitness function value does not change after the ant’s movement, it is made to perform a local search again.
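The boundary absorption step can be sketched as a per-dimension clamp. The search ranges used here for the learning rate and the Dropout probability are hypothetical and serve only to make the example concrete.

```python
def absorb_to_bounds(position, lower, upper):
    """Boundary absorption: any coordinate outside [lower, upper] is set to the bound."""
    return [min(max(x, lo), hi) for x, lo, hi in zip(position, lower, upper)]


# Hypothetical search space: learning rate in [1e-4, 1e-1], Dropout probability in [0.0, 0.9].
LOWER = (1e-4, 0.0)
UPPER = (1e-1, 0.9)

# An ant that overshot the upper bound in dimension 0 and the lower bound in dimension 1.
clamped = absorb_to_bounds([0.25, -0.3], LOWER, UPPER)
print(clamped)
```

Coordinates already inside the solution space are left unchanged, so the operation only affects ants that have left it.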

From Eq. (5), the local search explores the neighborhood of the current coordinates with a random step length, which decreases over time due to the presence of λ. This allows the algorithm to quickly and accurately locate the local optimal solution. In the global search, if the pheromone concentration difference between an ant and the current optimal solution is too large, the ant performs another random global search based on its current position. During this exploration, the ant is also attracted to the historical optimal solution, moving towards it with a random probability.

In the early stages of the search, (1 − λ) → 0, so each ant is relatively independent and not strongly attracted to the historical optimal solution, which encourages global search. This allows the ant colony to explore the solution space more quickly and widely. In the later stages, (1 − λ) → 1, causing most ants to converge towards the historical optimal solution and explore its neighborhood within a limited number of iterations. This helps the algorithm find an approximate optimal solution close to the true optimum. The presence of the random number $r_3$ ensures that some ants will still wander away from the historical optimal solution, preserving the potential for continued exploration.

3.3 ENSACO-LSTM fusion prediction algorithm

The schematic diagram of the ENSACO-LSTM fusion prediction algorithm is shown in Fig.2. It can be divided into four parts. The upper part corresponds to data acquisition and data processing; the second part, located slightly left of the center, is the ENSACO module, which optimizes the LSTM hyperparameters; the third part, on the right, is the model learning and training process; and the bottom part involves prediction and error evaluation. The prediction principle of ENSACO-LSTM can be summarized in four steps:

1) The dataset is divided into two parts, one for training and the other for prediction, and the training set is then split again. The resulting training set 1 and training set 2 are used for simulated LSTM training and simulated prediction, respectively, so that ENSACO can optimize the hyperparameters.

2) The ENSACO algorithm is initialized by randomly generating a 2-dimensional ant population, with each ant representing an initial learning rate and a Dropout probability.

3) The LSTM prediction process is simulated and the optimal learning rate and Dropout probability are found in the process.

4) When the termination condition is satisfied, the optimal solution is obtained. Then, degradation prediction is made based on the optimized learning rate and Dropout probability and the final result is output.

In this research, the input parameters selected for the fusion method are given in Section 2. In the ENSACO algorithm, each ant consists of the initial learning rate and Dropout probability of the LSTM neural network.
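The four steps above can be sketched as the following skeleton. It only illustrates the control flow under several assumptions: the inner 80/20 split, the search bounds, and the colony size are hypothetical; the candidate update is plain random resampling standing in for the ENSACO update rules; and `surrogate` is a made-up objective standing in for "train the LSTM on training set 1 and compute the RMSE on training set 2".

```python
import random


def chronological_split(series, train_fraction):
    """Split a time series into an earlier part and a later part."""
    cut = int(round(len(series) * train_fraction))
    return series[:cut], series[cut:]


def optimize_hyperparameters(objective, bounds, n_ants=10, n_generations=20, seed=0):
    """Search skeleton; random resampling is a stand-in for the ENSACO updates."""
    rng = random.Random(seed)
    # Step 2: random 2-D population, each ant = (learning rate, Dropout probability).
    ants = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_ants)]
    best = min(ants, key=objective)
    best_score = objective(best)
    for _ in range(n_generations):
        # Step 3 (stand-in): evaluate new candidates and keep the best one found.
        for _ in range(n_ants):
            candidate = [rng.uniform(lo, hi) for lo, hi in bounds]
            score = objective(candidate)
            if score < best_score:
                best, best_score = candidate, score
    # Step 4: the best hyperparameters are returned for the final prediction run.
    return best, best_score


# Step 1: outer split (training vs. prediction), then an inner split of the training part.
power = [float(i) for i in range(100)]               # placeholder series, not real data
train, test = chronological_split(power, 0.8)
train_1, train_2 = chronological_split(train, 0.8)   # hypothetical inner ratio


def surrogate(ant):
    """Made-up smooth objective with its minimum at (0.02, 0.3)."""
    learning_rate, dropout = ant
    return ((learning_rate - 0.02) ** 2 + (dropout - 0.3) ** 2) ** 0.5


BOUNDS = [(1e-4, 1e-1), (0.0, 0.9)]
best, best_score = optimize_hyperparameters(surrogate, BOUNDS)
print(best, best_score)
```

In an actual implementation, the objective would wrap an LSTM training run, which is why the number of objective evaluations (colony size times generations) dominates the cost of the procedure.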

4 PEMFCs power degradation prediction based on ENSACO-LSTM

4.1 Durability tests of PEMFCs

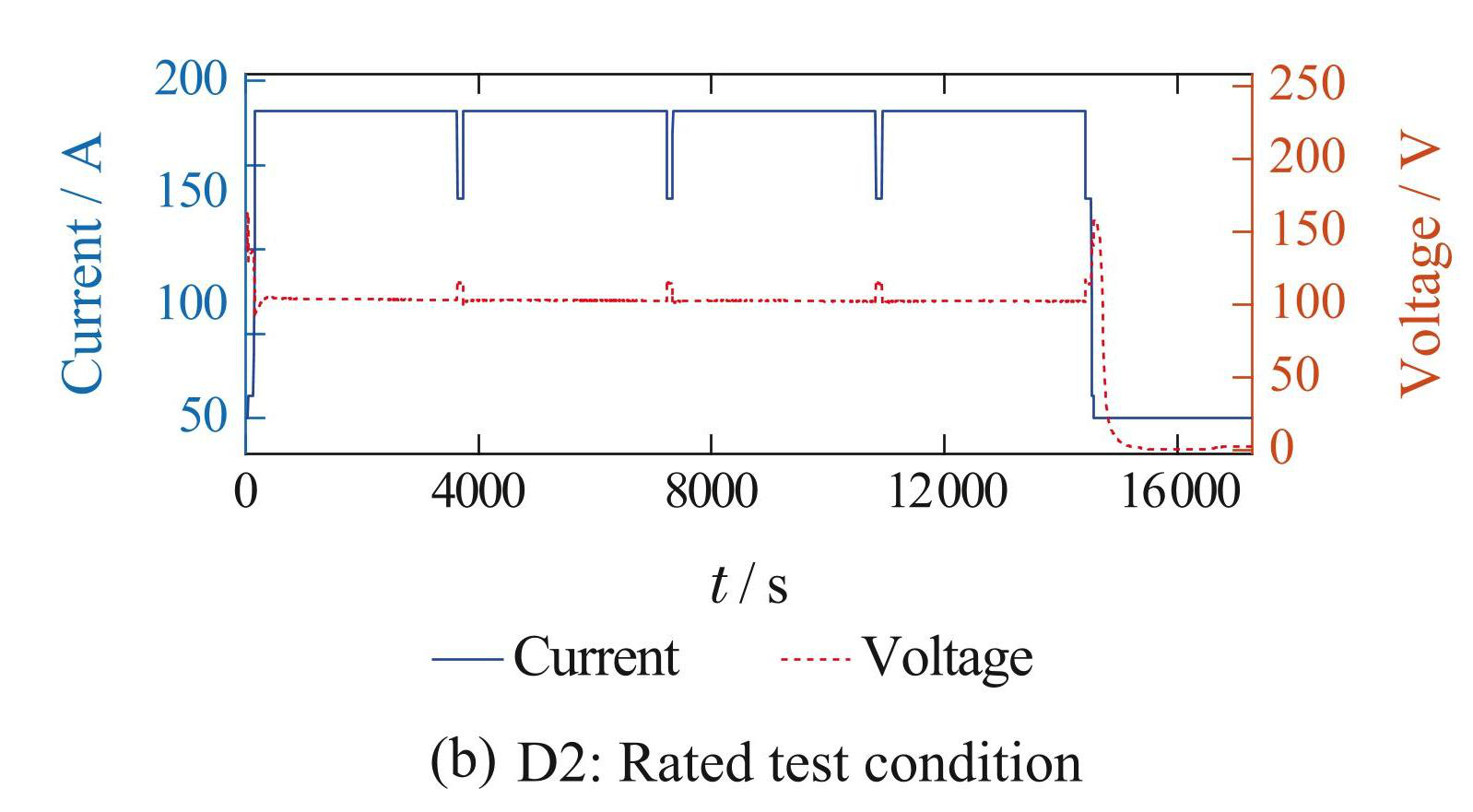

Through collaboration with the Xiangyang Daan Automobile Testing Center, this study obtained an aging dataset from a PEMFC stack with a rated power of 37 kW after a complete aging test process. The dataset was generated from durability tests conducted in accordance with two standards: GB/T 38914-2020 and DF2021-1210. The fuel cell stack is composed of 162 single cells. After data analysis, this study selected two datasets from the aging data, the stability assessment condition and the rated test condition (as shown in Fig.3), as the training and validation datasets for fuel cell performance degradation.

As depicted in Fig.3, the stability assessment condition (DYN 1) runs for 340 hours. The condition spectrum includes starting and stopping once per hour, 27 load cycles, 21 minutes at idle, and 18 minutes at rated condition. Every 4 hours, the fuel cell stack halts and rests for 1 hour. The rated test condition (DYN 2) lasts for 75 hours in total and involves startup, idle condition, rated current condition, reference current condition, and idle stop condition. A single test cycle, which is shown in Fig.3, completes every 4 hours, followed by a 1-hour stop for the fuel cell stack.

Fig.2 The schematic diagram of the ENSACO-LSTM fusion prediction algorithm

Fig.3 Fuel cell stack test cycle

After the original fuel cell data are obtained, they are resampled and filtered. A Savitzky-Golay (SG) filter is used to reduce data noise. The SG filter smooths the original time-domain signal by fitting a local polynomial to each window of samples using least squares. The most important feature of this method is that the filtered signal preserves the shape of the original data. The SG filter is set with a polynomial order of 2 and a filter window length of 11 [22].
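A minimal sketch of SG smoothing with the settings stated above (polynomial order 2, window length 11) is given below. It uses the closed-form convolution weights of a quadratic SG smoother. The edge handling, which repeats the first and last samples, is an assumption made here because the text does not specify it, and the test signal is synthetic.

```python
def savgol_quadratic_coeffs(window_length):
    """Closed-form smoothing weights of a quadratic Savitzky-Golay filter."""
    if window_length % 2 == 0 or window_length < 3:
        raise ValueError("window_length must be odd and at least 3")
    m = window_length // 2
    denom = (2 * m + 3) * (2 * m + 1) * (2 * m - 1)
    return [3 * (3 * m * m + 3 * m - 1 - 5 * i * i) / denom
            for i in range(-m, m + 1)]


def savgol_smooth(signal, window_length=11):
    """Smooth a sequence with a quadratic SG filter of the given window length."""
    coeffs = savgol_quadratic_coeffs(window_length)
    m = window_length // 2
    # Assumption: pad by repeating the edge samples (edge handling is not stated in the text).
    padded = [signal[0]] * m + list(signal) + [signal[-1]] * m
    return [sum(c * padded[k + j] for j, c in enumerate(coeffs))
            for k in range(len(signal))]


# Synthetic quadratic trend; a quadratic SG filter should leave interior points unchanged.
trend = [0.5 * t * t - 3.0 * t + 2.0 for t in range(30)]
smoothed = savgol_smooth(trend, window_length=11)
print(savgol_quadratic_coeffs(11)[5])
```

Because the filter fits a second-order polynomial locally, a signal that is itself quadratic passes through unchanged away from the edges, which illustrates the shape-preserving property mentioned above. Where SciPy is available, `scipy.signal.savgol_filter` provides the same filter with several edge-handling modes.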

After data processing, the PEMFC power degradation data used for method validation are obtained, with the stability assessment condition dataset (D1) shown in Fig.4. The rated test condition dataset (D2) is processed in the same manner and is not described separately.

Fig.4 Data filtered by the Savitzky-Golay filter

4.2 Degradation prediction results

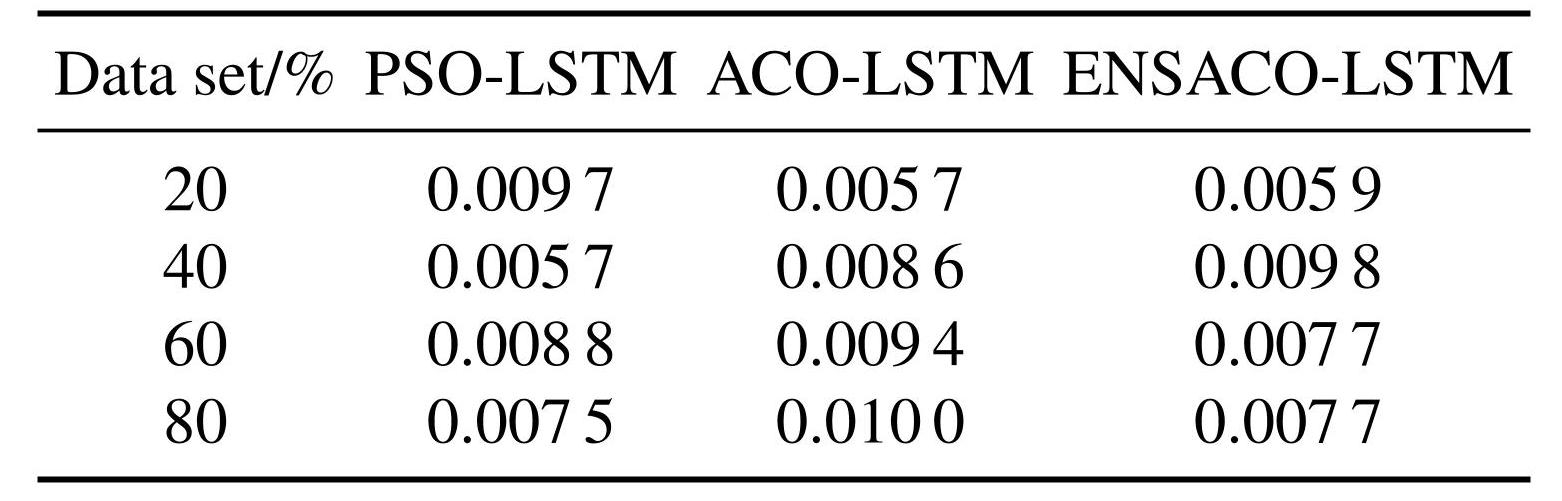

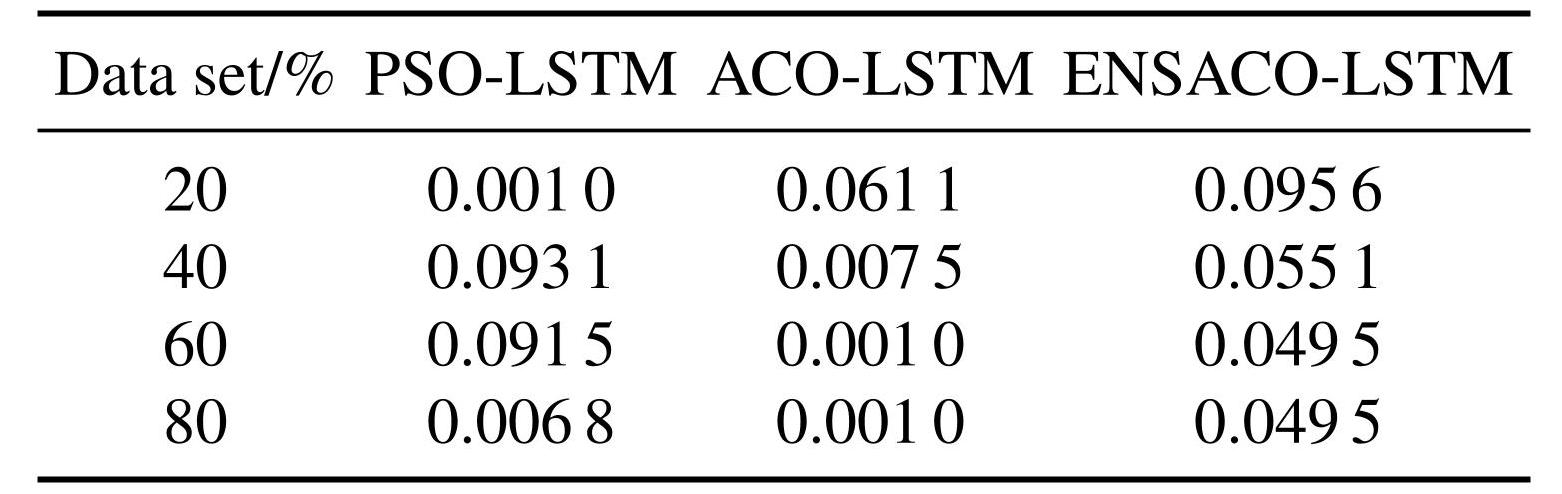

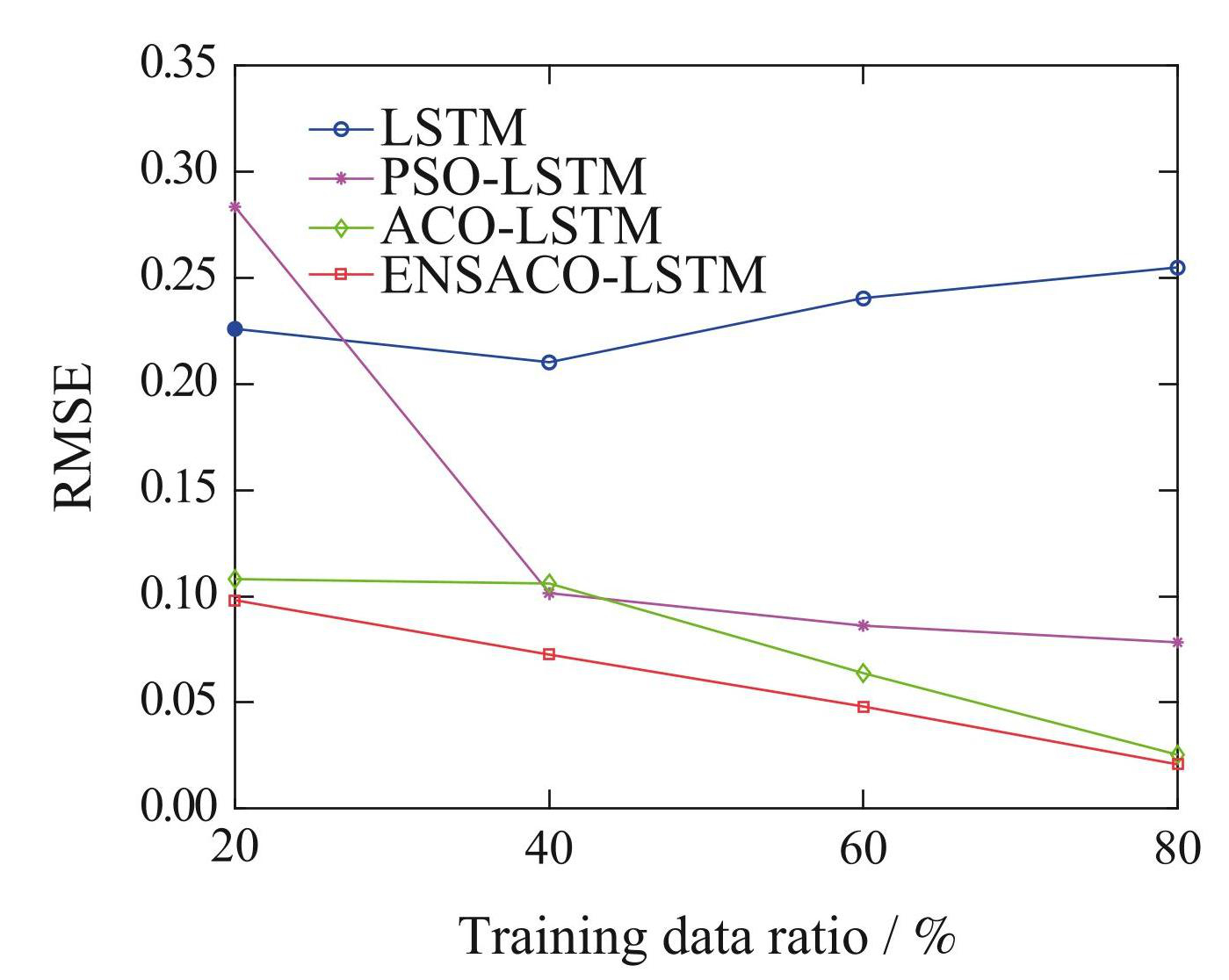

In this section, the D1 (stability assessment condition) dataset is used for training and prediction testing, while the D2 (rated test condition) dataset is used for generalization verification. To evaluate the performance of the ENSACO-LSTM fusion algorithm, four comparative experiments are conducted using LSTM, PSO-LSTM, ACO-LSTM, and ENSACO-LSTM. All methods are implemented using CPU computation. The LSTM architecture in all four experiments consists of an input layer, LSTM layer, fully connected layer, Dropout layer, and regression layer, with the Adam solver employed over 150 iterations. The Dropout technique prevents overfitting by randomly dropping neurons [27]. Training data amounts are set to 20%, 40%, 60%, and 80% of the total dataset. In the first experiment, as recommended in [22], the learning rate is set to 0.01 and the Dropout probability to 0.5. The parameter settings for all experiments are summarized in Table 1. The root mean square error (RMSE) is used to assess the prediction performance of the degradation model.

Table 1 Comparison of parameter settings between methods
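For reference, the RMSE used as the evaluation metric can be computed as in the short sketch below. The power values in the example are hypothetical and are not taken from the D1 or D2 datasets.

```python
import math


def rmse(actual, predicted):
    """Root mean square error between two equally long sequences."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("inputs must be non-empty and of equal length")
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))


# Hypothetical stack power readings (kW) and model predictions.
score = rmse([37.0, 36.8, 36.5], [37.1, 36.6, 36.5])
print(f"RMSE = {score:.4f}")
```

Because the errors are squared before averaging, a few large deviations raise the RMSE more than many small ones.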

In this study, based on the IEEE PHM 2014 Data Challenge [11], the health index of the fuel cell is defined as the stack’s capability to consistently provide sufficient power output. Thus, peak power attenuation is utilized to indicate the health index of the fuel cell stack.

In Fig.5, the gray curve represents the actual power of the PEMFCs, the blue curve shows the prediction by the LSTM method, the purple curve depicts the prediction results from the PSO-LSTM method, the green curve illustrates the ACO-LSTM method’s predictions, and the red curve indicates the predictions from the ENSACO-LSTM method. Tables 2–3 display the learning rates and Dropout probabilities determined through adaptive optimization for each method. The learning rate and Dropout probability for the LSTM method are fixed at 0.01 and 0.5, respectively, across all four training data proportions.

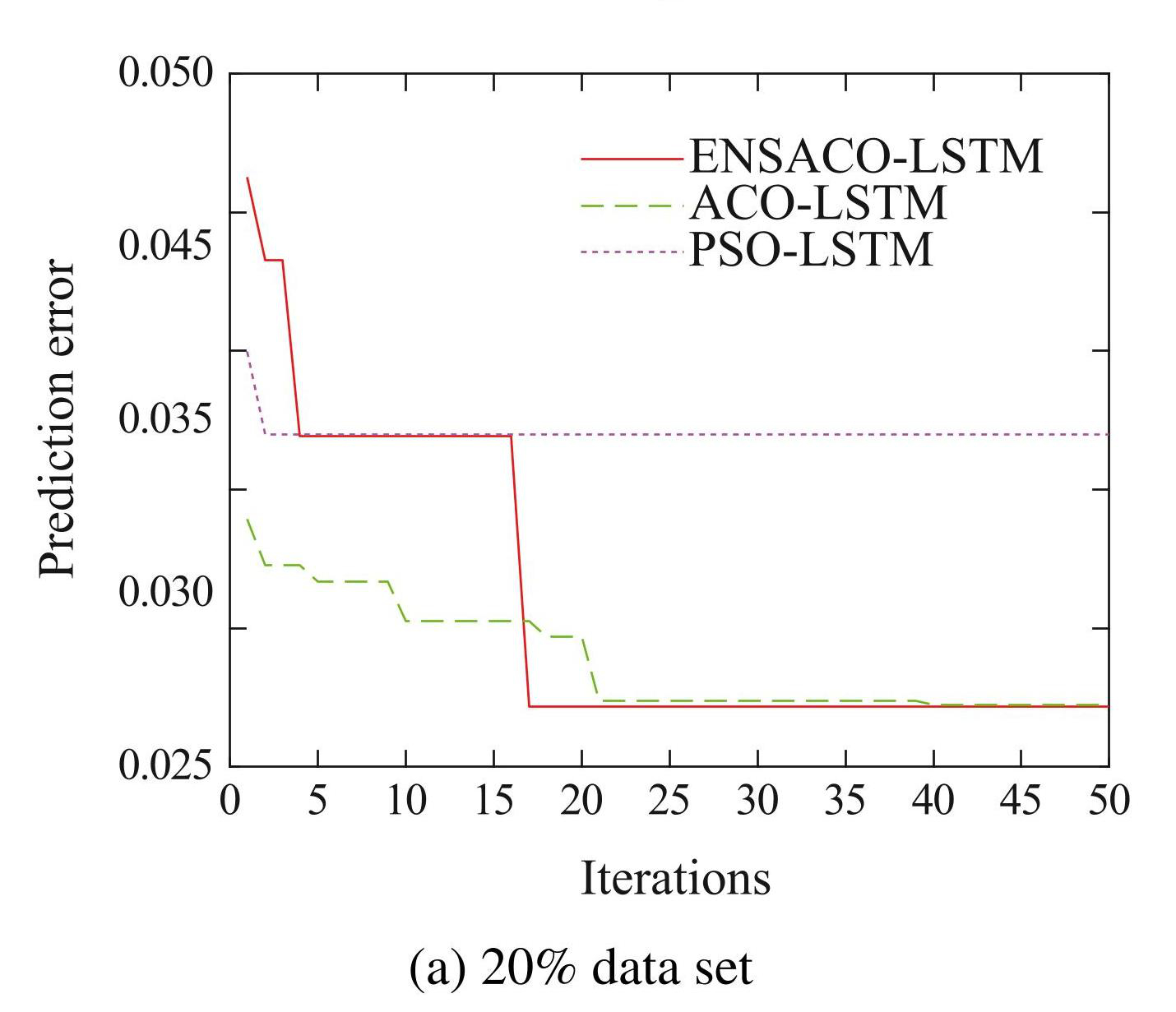

As shown in Fig.5 (a), the PSO-LSTM exhibits significant overfitting during the prediction phase. According to Table 3, the Dropout probability optimized by PSO is too low, which leads to overfitting. The premature convergence of PSO can be seen in Fig.6 (a). In the 20% D1 prediction, the ENSACO method, despite unfavorable initial conditions of the ant colony (initial RMSE = 0.0463), quickly converged to RMSE = 0.0272 within 17 generations. In contrast, the ACO method, despite having a better initial position (initial RMSE = 0.0339), took 40 generations to converge to RMSE = 0.0273.

As shown in Fig.5 (c), in the 60% D1 experiment, the prediction results of the PSO-LSTM, ACO-LSTM, and ENSACO-LSTM methods do not differ significantly. Fig.6 (c) shows that in this experiment the PSO method did not exhibit premature convergence, successfully escaping 2 local optima and continuing to explore. Thus, the premature convergence of PSO occurs only with a certain probability. On the other hand, given similar initial solution positions, ENSACO found a solution with RMSE = 0.0371 within 22 generations, whereas the ACO method found a solution with RMSE = 0.0380 by the 35th generation. Therefore, within a limited number of iterations, especially when the number of iterations is small, the optimization capability of ENSACO is superior to that of the ACO method.

Fig.5 Degradation prediction performance of different methods

Table 2 Learning rate obtained by different optimization methods

Table 3 Dropout probability obtained by different optimization methods

As seen in Fig.6, when the total number of optimization iterations is set to 50, ENSACO almost always finds a near-optimal solution within 25 generations. However, in Fig.6 (d), ENSACO still escapes three local optima after 25 iterations, ultimately finding a near-optimal solution with RMSE = 0.0274. This demonstrates the necessity of retaining a certain level of randomness in the algorithm. Therefore, with appropriate parameter settings, the ENSACO method can also perform well in application scenarios with a larger number of iterations.

Fig.6 Convergence of different optimization methods

As shown in Fig.7, with the hyperparameters recommended in [22], LSTM fails to meet the learning and training needs of the D1 dataset. The ENSACO-LSTM method exhibits excellent predictive performance across all data proportions. Within the constraint of 50 iterations, it not only converges faster but also performs remarkably well in global optimization. Overall, it achieves the lowest prediction error among the four methods.

4.3 Verification of ENSACO-LSTM method

The prediction network, trained using the ENSACO-LSTM method with 80% of the D1 dataset, is employed to predict the D2 dataset, as shown in Fig.8. The ENSACO-LSTM accurately predicted the subsequent degradation trend. Throughout the entire prediction phase of D2, the error between the target and the output mostly remained within ±0.2 kW, with an average prediction error of ±0.10%, indicating that the network’s generalization capability is acceptable.

Fig.7 Comparison of prediction errors of different methods

Fig.8 Prediction results of ENSACO-LSTM training model under 80% data ratio

5 Conclusion

This paper introduces a data-driven method based on ENSACO-LSTM, utilizing the improved swarm optimization algorithm ENSACO to adaptively optimize the hyperparameters of the neural network. This approach aims to achieve effective learning and accurate prediction across different datasets and data volumes. The main contributions of this study are as follows:

1) The SHAP value method was employed to select the external characteristic parameters that contribute more significantly to power degradation prediction, which were then used as inputs for the data-driven method.

2) This paper designed a novel ENSACO algorithm, which mitigates the slow convergence of the traditional ACO algorithm by means of the search enhancement factor δ.

3) In the study, a novel data-driven method for predicting the power degradation trend of PEMFCs is introduced, called ENSACO-LSTM. The ENSACO is employed to adaptively and quickly optimize the hyperparameters of LSTM.

The ENSACO-LSTM method excels in predicting the power degradation trend of PEMFCs. However, the ENSACO method tends to explore the vicinity of the historical optimal solution in the later stages of the algorithm. Therefore, when a large number of iterations is allowed, ACO may perform better. The prediction method discussed in this paper is based on one-step prediction; in future work, the authors will further study the application of multi-step prediction to fuel cell performance degradation.